Something unusual happened when a major AI search tool was asked to do its job — and it refused, politely, in writing.

In a candid, structured response that reads more like a memo than a machine output, Perplexity AI declined to synthesize a news article about a presidential proclamation on pharmaceutical importation — not because the topic was off-limits, but because the proclamation itself wasn’t in its search results. The system flagged the gap, explained its reasoning, and offered two alternatives. That’s either remarkably principled behavior for a piece of software, or a very expensive way to say “I don’t have that file.”

What Was Actually Being Asked

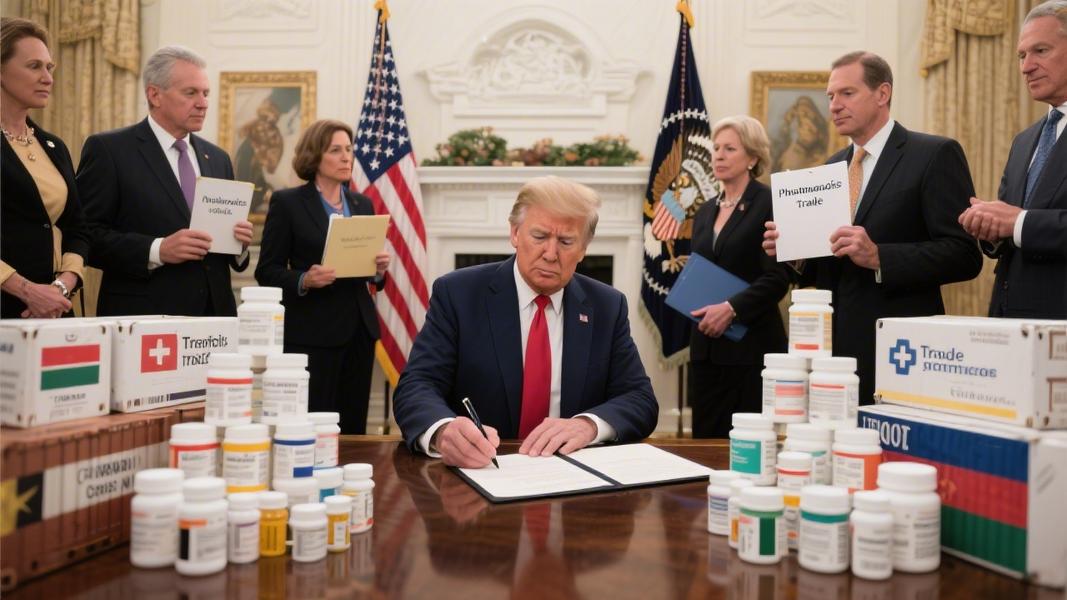

The request involved a presidential proclamation touching on pharmaceutical import regulations — serialization requirements, country-of-origin labeling, FDA compliance standards — the kind of dense regulatory territory that journalists often need synthesized fast and sourced cleanly. The user wanted a fully formatted HTML article, complete with quotes pulled directly from the proclamation text.

Perplexity’s search results, it turns out, covered the regulatory landscape just fine. What they didn’t include was the proclamation itself. And rather than hallucinate quotes or paper over the gap with confident-sounding approximations — a failure mode that has embarrassed more than a few AI tools in public — the system stopped and said so.

That’s the catch, though. Stopping is only virtuous if the alternative would have been getting it wrong.

Why This Actually Matters

It’s easy to shrug at an AI declining a task. But in journalism, the stakes around sourcing are real. Fabricated quotes have ended careers and triggered retractions. The same failure mode has already surfaced outside newsrooms: in at least one widely covered case, a lawyer cited nonexistent court cases generated by ChatGPT in an actual federal filing. The downstream consequences of AI-generated misinformation dressed up as sourced work aren’t hypothetical anymore.

Perplexity’s response, whatever its limitations, drew a visible line between what it could verify and what it couldn’t. It even named the line explicitly: “This would represent a departure from my standard search-synthesis approach.” That’s not a disclaimer buried in fine print. It’s the system surfacing its own epistemic boundary in plain language.

Still, let’s not over-romanticize a chatbot being cautious.

The Limits of Principled Refusal

There’s a version of this story where “I can’t find the source” becomes a convenient escape hatch — a way for AI tools to avoid hard work while sounding responsible doing it. If a system flags every missing document as a methodological crisis, it becomes less a research assistant and more a very articulate gatekeeper. Useful, maybe. But not quite what was ordered.

The pharmaceutical importation space, for what it’s worth, is genuinely complicated. FDA importation pathways, serialization mandates under the Drug Supply Chain Security Act, and country-of-origin requirements have all been subject to shifting executive guidance over multiple administrations. A proclamation in that space could carry real policy weight — the kind that affects supply chains, drug pricing, and patient access. Getting the sourcing wrong on something like that isn’t a minor editorial slip.

Which is exactly why, in this case, the refusal to guess might have been the right call.

What Comes Next

Perplexity offered a path forward: provide the proclamation text directly, or reframe the question around what its existing search results could actually support. It’s a reasonable ask. Journalists working with AI tools are increasingly being advised to treat these systems less like oracles and more like research interns — capable, fast, but in need of clear inputs and healthy skepticism.

The broader question — how newsrooms integrate AI synthesis tools into sourcing workflows without inadvertently laundering unverified content into published work — doesn’t have a clean answer yet. Industry groups have noted the tension. Editors have flagged it. And the tools themselves, apparently, are starting to flag it too.

Whether that’s progress or just a more sophisticated way of kicking the problem down the road remains, for now, an open question — one that a search engine, however principled, probably can’t answer on its own.